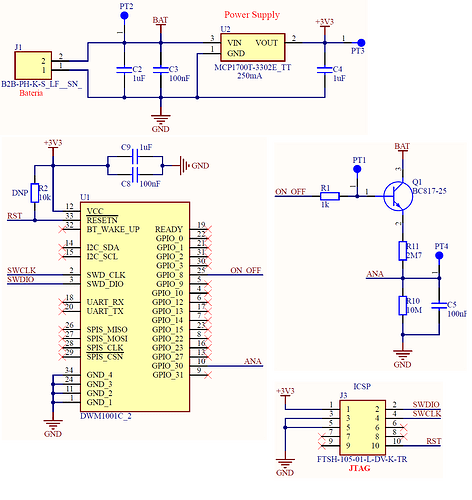

I am trying to estimate how long a DWM1001-based tag will run on battery. I'm following the standard configuration, with bridge nodes attached to Raspberry Pi 3 boards as gateways, and both anchors and gateways powered from a wall outlet. The tag is a board with a DWM1001 powered by 3000 mAh Li-Ion batteries and connected to 2 sub-circuits:

- voltage regulator circuit with output of 3.3 V for power

- voltage divider circuit for battery reading connected to pins 10 (GPIO_30) and 25 (GPIO_8): pin 10 reads voltage directly from battery and pin 25 connects to a transistor that enables this reading.

The tag runs user application code that basically waits for the DWM_EVT_LOC_READY event and uses it to transmit the latest pin 10 reading to the gateway. It also enables and reads pin 10 periodically (for these tests a new battery reading is taken every minute, which I understand affects battery consumption, but that is already included in the measurements shared below).

Tag configuration:

• Stationary detection: off (but it keeps turning back on, as I can see in the web and phone apps)

• Responsive mode: off

• Bluetooth: on

• Low power mode: on.

• Update rate: 1 Hz (for both stationary and moving, since I am not able to keep stationary detection turned off).

This tag (and its sub-circuits) works. It was tested on a breadboard before the PCB version, and we can see normal operation in the web app, the phone app and over MQTT.

We now have 3 problems estimating system longevity for the same battery:

- Different estimation methods and different measuring equipment give different battery life estimates;

- None of the estimates matches the actual longevity;

- Batteries are drained into undervoltage when I leave the tag running.

Going into a little more detail:

Problems 1 and 2: I have tried measuring the current in several ways. First, directly: I cut the wire that connects to the negative terminal of the battery and connected each end to a multimeter, so the meter sits in series with the tag. Depending on the multimeter I get a different reading:

• Minipa ET-1507B: steady around 3.22 mA, but averages a little higher (since it has variations depending on what is happening, like turning on, ranging, etc);

• Fluke 117: the tag does not turn on, or the current reads below 1 mA, which seems to be the bottom of the scale;

• Tektronix DMM4050: steady around 6.8 mA, averages around 7 mA.

From these readings, the estimated longevity based on my battery should be:

• Minipa ET-1507B: ~750 hours (considering 4 mA);

• Fluke 117: not possible to estimate;

• Tektronix DMM4050: ~428.5 hours.
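These estimates are simply capacity divided by average current. A minimal sketch of that arithmetic, assuming the full nominal 3000 mAh is usable and the current is constant (neither is strictly true for a real Li-Ion cell behind a regulator); the function name is only for illustration:

```python
BATTERY_CAPACITY_MAH = 3000.0  # nominal capacity from the post


def runtime_hours(capacity_mah: float, current_ma: float) -> float:
    """Ideal runtime in hours: capacity (mAh) divided by average current (mA)."""
    if current_ma <= 0:
        raise ValueError("current must be positive")
    return capacity_mah / current_ma


# Currents used for the estimates in the post.
estimate_currents_ma = {
    "Minipa ET-1507B (rounded up to 4 mA)": 4.0,
    "Tektronix DMM4050 (about 7 mA average)": 7.0,
}

for meter, current_ma in estimate_currents_ma.items():
    hours = runtime_hours(BATTERY_CAPACITY_MAH, current_ma)
    print(f"{meter}: {hours:.1f} h")
```

This only reproduces the numbers above; it says nothing about whether the meter readings themselves capture the true average current.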

When I turn the tag on with the battery fully charged, it works for around 20-24 hours straight, which suggests an average current consumption of 125-150 mA, far higher than anything I measured.
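Running the same arithmetic backwards gives the average current implied by the observed runtime. This is a rough sketch that again assumes the whole nominal 3000 mAh was delivered to the load; the function name is hypothetical:

```python
BATTERY_CAPACITY_MAH = 3000.0  # nominal capacity from the post


def implied_current_ma(capacity_mah: float, runtime_h: float) -> float:
    """Average current (mA) needed to drain capacity_mah in runtime_h hours."""
    if runtime_h <= 0:
        raise ValueError("runtime must be positive")
    return capacity_mah / runtime_h


for runtime_h in (20.0, 24.0):
    current_ma = implied_current_ma(BATTERY_CAPACITY_MAH, runtime_h)
    # Compare against the highest steady multimeter reading (about 7 mA).
    ratio = current_ma / 7.0
    print(f"{runtime_h:.0f} h -> {current_ma:.0f} mA (about {ratio:.0f}x the 7 mA reading)")
```

Even against the largest mA-range reading, the implied current is more than an order of magnitude higher.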

At some point, while figuring out where to plug in the leads and which options to select on the Tektronix DMM4050, I accidentally selected the 10 A range. The 7 mA reading had been taken on the mA range, with the leads connected to the input marked 400 mA in red on the front of the multimeter and the input above it (the multimeter, for reference).

On the 10 A range, it measured a current of around 113-115 mA, closer to what seems to be the right answer. But someone pointed out that the red lead was still connected to the 400 mA input, and that it should go in the other input, the one marked 10 A.

After I placed the lead in what should be the right input, it went back to reading around 6.8-7 mA. ChatGPT explained that different shunts, with different resistances, are used for different ranges.

So I changed my approach to reading the voltage across a known resistance. I put a 0.3 ohm resistor in series with the battery and measured the voltage across its two ends. (I first tried a 47 ohm resistor, but it dropped ~1.3 V, which meant the DWM1001 was receiving only ~2.7 V and was not working, so I took the next lower resistor I had.)

• Minipa ET-1507B: shows 1-2 mV at tag startup, but stays at 0 mV afterwards; the tag is moving in the web app, suggesting the multimeter simply cannot resolve anything between 0 mV and 1 mV;

• Fluke 117: tag turns on now, reading steadily at 1 mV, which is the bottom of scale;

• Tektronix DMM4050: average 0.88 mV (because of some variation spikes from operation, but steady at ~0.8 mV).

From Ohm's law, the estimated current consumption and longevity based on the shunt resistance and the battery capacity should be:

• Minipa ET-1507B: not possible to estimate, since readings are steady at 0 mV;

• Fluke 117: 1 mV / 0.3 ohm = 3.33 mA => ~900 hours;

• Tektronix DMM4050: 0.88 mV / 0.3 ohm = 2.93 mA => ~1024 hours.
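The shunt calculation above can be sketched as follows. It assumes the 0.3 ohm value is exact (resistor tolerance and lead resistance are ignored) and that the meter reading is a true average; the function names are only for illustration. It also shows how coarse a 1 mV display step is on such a small shunt:

```python
BATTERY_CAPACITY_MAH = 3000.0  # nominal capacity from the post
SHUNT_OHMS = 0.3               # series resistor from the post, assumed exact


def shunt_current_ma(voltage_mv: float, shunt_ohms: float) -> float:
    """Ohm's law: I = V / R. Millivolts divided by ohms gives milliamps."""
    return voltage_mv / shunt_ohms


def runtime_hours(capacity_mah: float, current_ma: float) -> float:
    """Ideal runtime in hours for a constant current draw."""
    return capacity_mah / current_ma


for meter, voltage_mv in (("Fluke 117", 1.0), ("Tektronix DMM4050", 0.88)):
    current_ma = shunt_current_ma(voltage_mv, SHUNT_OHMS)
    hours = runtime_hours(BATTERY_CAPACITY_MAH, current_ma)
    print(f"{meter}: {current_ma:.2f} mA -> {hours:.0f} h")

# Current represented by one 1 mV step on the display with this shunt.
step_ma = shunt_current_ma(1.0, SHUNT_OHMS)
print(f"1 mV of resolution corresponds to {step_ma:.2f} mA")
```

Without intermediate rounding, the DMM4050 figure comes out at about 1023 h, within rounding of the ~1024 h quoted. With 1 mV resolution, any current below about 3.3 mA can show up as 0 mV, which is consistent with the Minipa reading.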

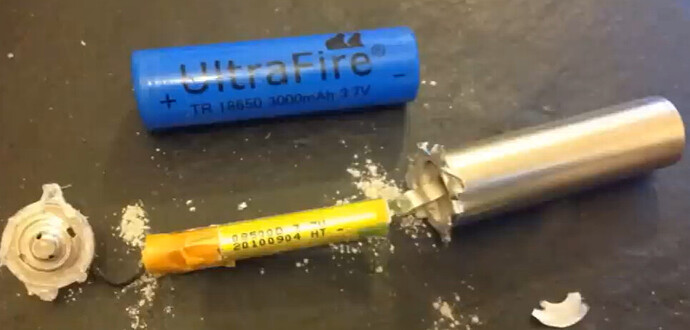

Again, it lasted approximately 24 hours, with the added bonus that I came back to a battery at 1.2 V that no longer charges.

Which brings us to problem 3: the device keeps drawing current after the battery drops below ~2.8 V, the point at which it stops working.

Shouldn't the DWM1001C stop drawing current, since it does not work below that voltage? Or is it the other components, like the LDO, that keep drawing? Is external circuitry the only way to prevent this?