I am evaluating the DWM1000 with the SS and DS TWR examples. I observed that double-sided TWR gives a narrower error distribution than single-sided, because the clock speed difference is eliminated from the equation.

However, I’ve noticed that SS TWR uses the carrier integrator value to adjust the distance calculation according to the XTAL offset between the two communicating parties. The same call to dwt_readcarrierintegrator() is used in the XTAL Trim example. So I decided to give it a try and added a few lines of code to trim the XTAL on the fly in the SS TWR example (whenever the offset goes over 1.75 ppm).

// CONFIG :

static dwt_config_t config = {

2, /* Channel number. */

DWT_PRF_64M, /* Pulse repetition frequency. */

DWT_PLEN_128, /* Preamble length. Used in TX only. */

DWT_PAC8, /* Preamble acquisition chunk size. Used in RX only. */

9, /* TX preamble code. Used in TX only. */

9, /* RX preamble code. Used in RX only. */

0, /* 0 to use standard SFD, 1 to use non-standard SFD. */

DWT_BR_6M8, /* Data rate. */

DWT_PHRMODE_STD, /* PHY header mode STANDARD (127 MAC symbols). */

(129 + 8 - 8) /* SFD timeout (preamble length + 1 + SFD length - PAC size). Used in RX only. */

};

// RESPONSE PROCESSING :

/* Read carrier integrator value and calculate clock offset ratio. See NOTE 11 below. */

xtalOffset_ppm = dwt_readcarrierintegrator() * (FREQ_OFFSET_MULTIPLIER * HERTZ_TO_PPM_MULTIPLIER_CHAN_2);

clockOffsetRatio = xtalOffset_ppm * 0.000001f;

/* Get timestamps embedded in response message. */

resp_msg_get_ts(&rx_buffer[RESP_MSG_POLL_RX_TS_IDX], &poll_rx_ts);

resp_msg_get_ts(&rx_buffer[RESP_MSG_RESP_TX_TS_IDX], &resp_tx_ts);

/* Compute time of flight and distance, using clock offset ratio to correct for differing local and remote clock rates */

rtd_init = resp_rx_ts - poll_tx_ts;

rtd_resp = resp_tx_ts - poll_rx_ts;

tof = ((rtd_init - rtd_resp * (1 - clockOffsetRatio)) / 2.0) * DWT_TIME_UNITS;

distance = tof * SPEED_OF_LIGHT;

// crystal trimming

if(fabs(xtalOffset_ppm) > TARGET_XTAL_OFFSET_VALUE_PPM_MAX)

{

uCurrentTrim_val -= 0.65 * AVG_TRIM_PER_PPM * xtalOffset_ppm ;

uCurrentTrim_val &= FS_XTALT_MASK;

/* Configure new Crystal Offset value */

dwt_setxtaltrim(uCurrentTrim_val);

sprintf(msg_str, "XTALTRIM : %d\r\n", uCurrentTrim_val);

Serial_WriteString(msg_str);

}

/* Display computed distance on UART. */

sprintf(msg_str, "DIST %3.2f m, XTAL %2.2f PPM, SQ #:%d\r\n", distance, xtalOffset_ppm, frame_seq_nb);

Serial_WriteString(msg_str);
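To make the correction step above easier to reason about, here is a minimal standalone sketch of the same arithmetic, with no DW1000 driver calls. The function names `ss_twr_distance` and `ss_twr_uncorrected_error_m` are hypothetical, and the values used for `DWT_TIME_UNITS` and `SPEED_OF_LIGHT` are the ones I believe the Decawave examples define, so treat them as assumptions.

```c
#include <stdint.h>

/* Assumed to match the Decawave example definitions. */
#define DWT_TIME_UNITS (1.0 / 499.2e6 / 128.0) /* one device tick, about 15.65 ps */
#define SPEED_OF_LIGHT 299702547.0             /* m/s, in air */

/* Same SS TWR formula as in the post: the responder's reply time is
 * rescaled by the measured clock offset before it is subtracted. */
static double ss_twr_distance(uint32_t poll_tx_ts, uint32_t resp_rx_ts,
                              uint32_t poll_rx_ts, uint32_t resp_tx_ts,
                              double offset_ppm)
{
    /* Unsigned subtraction handles 32-bit timestamp wrap-around. */
    int32_t rtd_init = (int32_t)(resp_rx_ts - poll_tx_ts);
    int32_t rtd_resp = (int32_t)(resp_tx_ts - poll_rx_ts);
    double clock_offset_ratio = offset_ppm * 1e-6;
    double tof = ((rtd_init - rtd_resp * (1.0 - clock_offset_ratio)) / 2.0)
                 * DWT_TIME_UNITS;
    return tof * SPEED_OF_LIGHT;
}

/* Magnitude of the distance error if the offset were NOT corrected:
 * half the reply delay times the offset ratio, converted to metres. */
static double ss_twr_uncorrected_error_m(double reply_delay_s, double offset_ppm)
{
    double err = 0.5 * reply_delay_s * (offset_ppm * 1e-6) * SPEED_OF_LIGHT;
    return err < 0.0 ? -err : err;
}
```

As an illustration with made-up numbers: a reply delay of 330 µs and an offset of 3 ppm would give an uncorrected error of roughly 0.15 m, which is why SS TWR depends so heavily on the quality of the carrier integrator estimate.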

After a few experiments I captured data in one run where the crystal was trimmed to within 0.20…0.80 ppm, and in another run where it settled at 1.2…1.5 ppm.

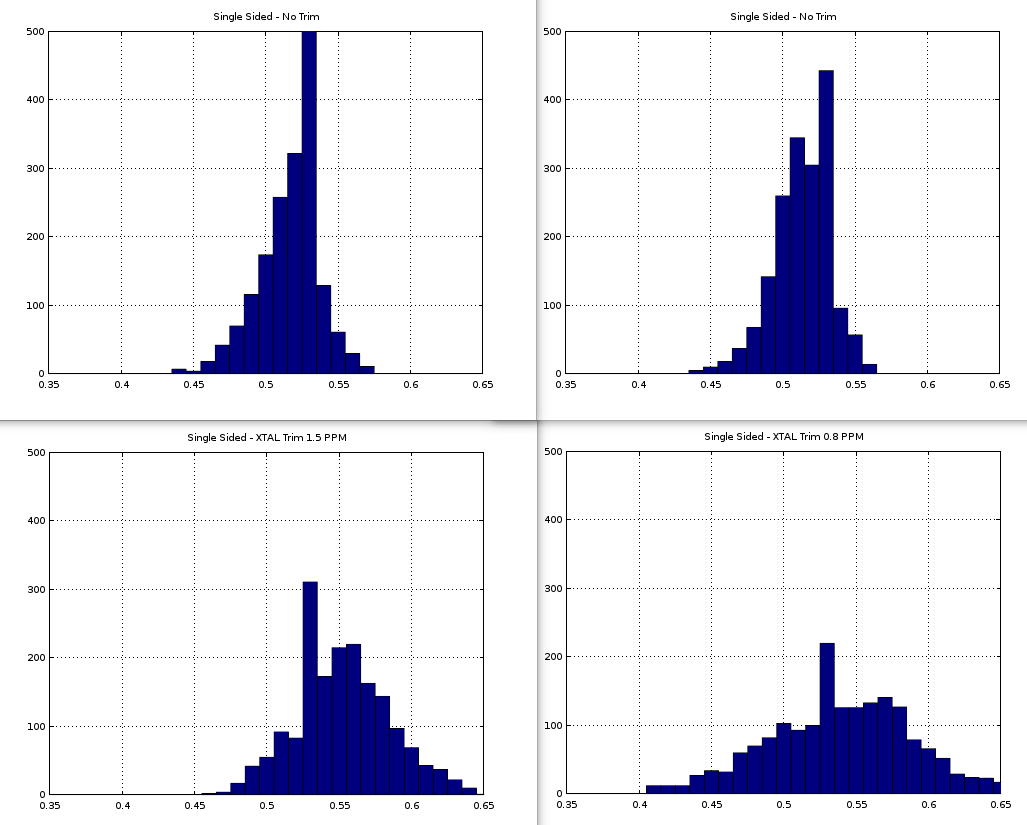

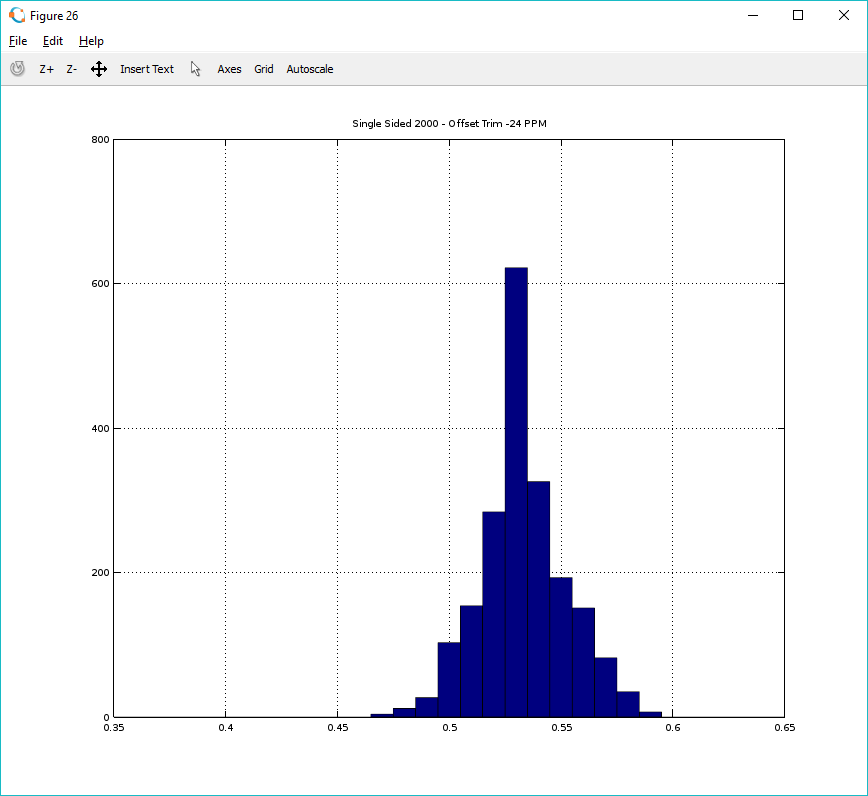

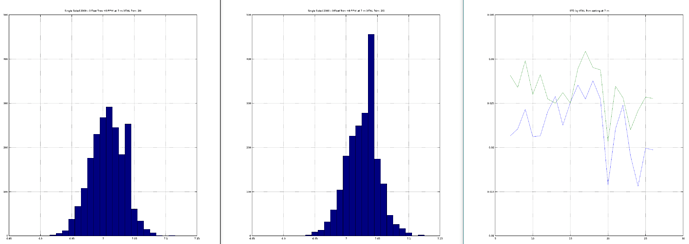

To my big surprise, the error distribution became twice as wide. The better the trim, the worse the result. These are histograms of the measurement error distribution, 1800 measurements each (the distance is fixed and identical in all cases, around 0.55 meters). All measurements were performed on the same setup.

The two charts at the top are typical SS TWR results I get without XTAL trimming. In this case xtalOffset_ppm usually sits near -3 ppm.

The two charts at the bottom are the measurement distributions with the added crystal trim code block (lines 222 – 232). Technically the trimming block executes only once or twice at startup, after which the offset settles below TARGET_XTAL_OFFSET_VALUE_PPM_MAX = 1.75. In other words, while I was capturing data for the two histograms at the bottom, no additional trimming was performed (no calls to dwt_setxtaltrim(), which could technically shift the timestamping).
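For reference, here is a rough standalone sketch of what a single trim update like the one above computes. It is not the code from my project: the function name `next_xtal_trim` is hypothetical, and the 5-bit trim range and the figure of about 32/48 trim steps per ppm are assumptions based on how I understand the XTAL Trim example. The sketch rounds and clamps the result instead of masking it, so an out-of-range value saturates rather than wrapping around.

```c
#include <stdint.h>

/* Assumptions, not taken from the driver headers verbatim. */
#define XTAL_TRIM_MAX      0x1F          /* 5-bit trim field */
#define TRIM_STEPS_PER_PPM (32.0 / 48.0) /* average trim steps per ppm */
#define TRIM_GAIN          0.65          /* same damping factor as in the post */

/* One damped trim update: same sign convention as the post
 * (trim -= gain * steps_per_ppm * offset), rounded to the nearest step
 * and clamped to the valid trim range. */
static uint8_t next_xtal_trim(uint8_t current_trim, double offset_ppm)
{
    double target = (double)current_trim
                    - TRIM_GAIN * TRIM_STEPS_PER_PPM * offset_ppm;
    long rounded = (long)(target + (target >= 0.0 ? 0.5 : -0.5));
    if (rounded < 0) {
        rounded = 0;
    }
    if (rounded > XTAL_TRIM_MAX) {
        rounded = XTAL_TRIM_MAX;
    }
    return (uint8_t)rounded;
}
```

Under these assumptions an offset of -3 ppm moves the trim by about one step, which is consistent with the block firing only once or twice before the offset drops under the 1.75 ppm threshold.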