Hi,

APS011 (Sources of Error in DW1000-Based Two-Way Ranging (TWR)) says that the receive timestamp depends on the received signal level (RSL), but it doesn’t explain why. Could anybody tell me why RSL affects the receive timestamp in the DW1000? (In our project, we are trying to compensate for as many ranging error sources as possible to reach the accuracy we need.)

By the way, I know ranging accuracy also depends on the relative orientation of the antennas. Is that related to RSL?

Hi, @DecaLeo

Thanks for your reply, that paper helps a lot. But it’s an experimental report and doesn’t answer my question. In short: in theory RSL and the timestamp should be independent, so what in the DW1000’s implementation causes the timestamp error?

regards

The DW1000 internally sets a threshold based on the channel noise level. When the signal crosses this threshold the leading edge is considered to be detected and the time for that edge is calculated.

If the leading edge of the signal were infinitely steep, the detected time would be completely independent of signal level. In the real world, however, the leading edge always has a finite slope. Since the signal source is the same for all receive levels, the time the signal takes to ramp up from nothing to its peak will be the same.

For the same environment the noise level and so the detection threshold will be the same for all receive levels.

But for a weak signal the peak will by definition be lower. If the signal takes the same amount of time to reach a lower maximum then the time taken to reach a common detection threshold must be longer for a weaker signal than for a stronger signal.
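A minimal numeric sketch of this argument, assuming (purely for illustration) that the leading edge is a straight-line ramp from zero to the peak over a fixed rise time, and that the threshold is fixed by the noise level. The function name and the numbers are hypothetical; the real DW1000 pulse shape and LDE logic are not modelled.

```python
# Speed of light in vacuum, metres per nanosecond.
C_M_PER_NS = 0.299792458


def crossing_time_ns(peak, threshold, rise_time_ns):
    """Time after the edge starts at which a linear ramp 0 -> peak
    (taking rise_time_ns to reach the peak) first reaches threshold.

    Returns None if the peak never reaches the threshold (no detection).
    Assumption: the leading edge is an ideal straight line.
    """
    if peak < threshold:
        return None
    return rise_time_ns * threshold / peak


if __name__ == "__main__":
    threshold = 500.0      # same noise level -> same threshold for every signal
    rise_time_ns = 2.0     # same source -> same rise time for every signal
    for peak in (4000.0, 2000.0, 1000.0):
        t = crossing_time_ns(peak, threshold, rise_time_ns)
        print(f"peak={peak:6.0f}  detected {t:.2f} ns after edge start "
              f"(equivalent to {t * C_M_PER_NS * 100:.1f} cm of distance)")
```

Under the linear-ramp assumption, halving the peak doubles the detection delay. A real pulse is not linear, so the actual delay versus RSL curve has to be measured rather than computed.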

Antenna orientation has two possible ways to affect the measured distance. Firstly, the gain pattern changes the signal strength. Secondly, the antenna’s construction means the phase centre is not actually at the physical centre of the antenna. If nothing else, in some orientations the signal must pass through the PCB, and radio signals travel slower in FR4 than in air.
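To get a feel for the size of the FR4 effect, here is a rough sketch that treats the board as a slab of bulk dielectric crossed at normal incidence, so the wave slows to c/√εr inside it. The relative permittivity of about 4.4 and the 1.6 mm thickness are assumed typical values, not figures from this thread, and the phase-centre and gain-pattern effects are not modelled at all.

```python
import math

C_M_PER_S = 299_792_458.0  # speed of light in vacuum


def fr4_excess_path_m(thickness_m, eps_r):
    """Extra apparent distance from crossing a dielectric slab.

    Inside the slab the wave travels at c / sqrt(eps_r), so crossing
    thickness_m takes as long as sqrt(eps_r) * thickness_m in air.
    The excess over the physical thickness is (sqrt(eps_r) - 1) * thickness_m.
    Assumptions: normal incidence, bulk permittivity, no reflections.
    """
    return (math.sqrt(eps_r) - 1.0) * thickness_m


if __name__ == "__main__":
    excess = fr4_excess_path_m(1.6e-3, 4.4)  # assumed 1.6 mm board, eps_r ~ 4.4
    print(f"excess path {excess * 1e3:.2f} mm, delay {excess / C_M_PER_S * 1e12:.1f} ps")
```

Under these assumptions the board itself adds under 2 mm; the other two mechanisms are not captured here and need to be measured.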

Hi, @AndyA

Thanks for your careful reply, I now have a better understanding of what happens in the DW1000. From what you said, can I conclude that increasing RSL (higher TX power, line of sight, …) will improve timestamp accuracy? That seems inconsistent with the results in Fig. 3 of the paper.

Also, does the DW1000 provide any statistics on the quality of the LDE (leading edge detection) result?

regards

Line of sight will always improve accuracy.

More power will help at longer ranges; at short distances with direct line of sight, more power won’t make a significant difference.

What Fig. 3 in that paper shows is that you need to compensate for the receive power level. At higher power levels the points are grouped more tightly, which implies that once you correct for the average error at a given power level, you will get better results for stronger signals.
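One way to apply that correction is to measure the ranging error against a known distance at several RX power levels, average the error per power bin, and subtract the interpolated bias from later measurements. This is a minimal sketch of that idea; the 2 dB bin width, the linear interpolation and the function names are assumptions, and in practice you would likely want separate tables per channel and PRF.

```python
import math
from bisect import bisect_left
from collections import defaultdict
from statistics import mean


def build_bias_table(samples, bin_width_db=2.0):
    """Average ranging error per RX-power bin.

    samples: iterable of (rx_power_dbm, measured_m, true_m) from a calibration run.
    Returns a list of (bin_centre_dbm, mean_error_m), sorted by power.
    """
    bins = defaultdict(list)
    for rx_dbm, measured_m, true_m in samples:
        bins[math.floor(rx_dbm / bin_width_db)].append(measured_m - true_m)
    return sorted(((idx + 0.5) * bin_width_db, mean(errs)) for idx, errs in bins.items())


def correct_range(measured_m, rx_dbm, table):
    """Subtract the bias interpolated at rx_dbm (clamped at the table ends)."""
    powers = [p for p, _ in table]
    biases = [b for _, b in table]
    if rx_dbm <= powers[0]:
        bias = biases[0]
    elif rx_dbm >= powers[-1]:
        bias = biases[-1]
    else:
        i = bisect_left(powers, rx_dbm)
        frac = (rx_dbm - powers[i - 1]) / (powers[i] - powers[i - 1])
        bias = biases[i - 1] + frac * (biases[i] - biases[i - 1])
    return measured_m - bias
```

Since the spread that remains at each power level is what limits you after correction, it may be worth logging the per-bin standard deviation too; per the tighter grouping in Fig. 3, it should shrink as power increases.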

Yes, the DW1000 has a number of registers that can be used to assess the quality of the detection; Decawave’s application notes and the device manual describe them. That said, I’ve generally found only a weak correlation between the actual quality and the reported values.
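Two quantities often derived from those registers are the estimated total RX power and the first-path power. The formulas below are the ones given in the DW1000 User Manual as I understand them (C = CIR_PWR, F1..F3 = FP_AMPL1..3, N = RXPACC, A = 113.77 for 16 MHz PRF or 121.74 for 64 MHz PRF); please check them against your revision of the manual, which also describes an adjustment to N in some configurations that this sketch does not apply. The function names are hypothetical.

```python
import math

# Constant A from the DW1000 User Manual, selected by PRF (verify for your revision).
A_BY_PRF_MHZ = {16: 113.77, 64: 121.74}


def rx_power_dbm(cir_pwr, rxpacc, prf_mhz=64):
    """Estimated received power: 10*log10(C * 2^17 / N^2) - A."""
    return 10.0 * math.log10(cir_pwr * 2**17 / rxpacc**2) - A_BY_PRF_MHZ[prf_mhz]


def first_path_power_dbm(fp_ampl1, fp_ampl2, fp_ampl3, rxpacc, prf_mhz=64):
    """Estimated first-path power: 10*log10((F1^2 + F2^2 + F3^2) / N^2) - A."""
    energy = fp_ampl1**2 + fp_ampl2**2 + fp_ampl3**2
    return 10.0 * math.log10(energy / rxpacc**2) - A_BY_PRF_MHZ[prf_mhz]


def power_gap_db(cir_pwr, fp_ampl1, fp_ampl2, fp_ampl3, rxpacc, prf_mhz=64):
    """Total minus first-path power. A large gap means the detected first
    path is weak relative to the total; this is often used as an NLOS hint."""
    return (rx_power_dbm(cir_pwr, rxpacc, prf_mhz)
            - first_path_power_dbm(fp_ampl1, fp_ampl2, fp_ampl3, rxpacc, prf_mhz))
```

Given the weak correlation mentioned above, the gap is best treated as one input among several rather than a reliable quality score.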

You can read the full channel impulse response (CIR) from the chip and perform your own leading edge detection. That has the potential to improve the results significantly, but it is slow and would considerably reduce the achievable update rate.
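A minimal sketch of your own leading edge detection on the CIR magnitudes, assuming the accumulator has already been read and each tap converted to a magnitude. The noise estimate from an early window, the mean + k·σ threshold, k = 6 and the linear interpolation between taps are all illustrative choices, not Decawave’s LDE algorithm. The tap spacing of roughly 1.0016 ns (half a 499.2 MHz period) is the commonly quoted DW1000 figure and should be checked against the user manual.

```python
from statistics import mean, pstdev

TAP_NS = 1.0 / (2 * 0.4992)  # ~1.0016 ns per CIR tap (assumed: 1 / (2 * 499.2 MHz))


def detect_leading_edge(cir_mag, noise_window, k=6.0):
    """Fractional tap index where |CIR| first crosses mean + k*sigma of the noise.

    cir_mag: sequence of CIR magnitudes, one per tap.
    noise_window: number of leading taps (>= 1) assumed to contain only noise.
    Returns None if nothing after the noise window crosses the threshold.
    """
    noise = cir_mag[:noise_window]
    threshold = mean(noise) + k * pstdev(noise)
    for i in range(noise_window, len(cir_mag)):
        if cir_mag[i] >= threshold:
            prev = cir_mag[i - 1]
            if prev >= threshold:
                # Previous tap (inside the noise window) was already above: no interpolation.
                return float(i)
            # Linear interpolation between tap i-1 (below) and tap i (at/above).
            return (i - 1) + (threshold - prev) / (cir_mag[i] - prev)
    return None
```

The result is in taps; multiplying by `TAP_NS` gives nanoseconds from the start of the accumulator. Because reading the full CIR is slow, this suits offline analysis or low update rates.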

Hi, @AndyA

I’m interested in this part and will investigate how to improve the detection result later.

Thank you so much for your help and patience.

regards

(After looking through the Decawave forum, I haven’t seen this answered)

@AndyA I’m working with the raw CIR, processing it to adapt the LDE threshold to NLOS environments. The aim is to configure the DW1000 so that it detects the timestamp more precisely (as Decawave explains in APP06_Part2), but lowering this threshold does not seem to improve NLOS scenarios much.

What I’ve done so far is change the LDE_CFG1 register (I swept NTM from 2 to its maximum to see how the CIR changed, and did the same with PMUL). After each change, I received a batch of messages while blocking the LOS with my body, and then plotted the values.
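For anyone repeating this sweep: as I read the DW1000 User Manual, LDE_CFG1 (register file 0x2E, sub-address 0x0806) is a single byte with NTM in bits 4:0 and PMULT in bits 7:5, and the manual recommends 0x6D (NTM = 13, PMULT = 3) in place of the 0x6C reset value. Please verify the layout against your manual revision. The sketch below only builds and decodes the byte; the SPI write depends on your driver and is left out, and the function names are hypothetical.

```python
NTM_MASK = 0x1F      # bits 4:0
PMULT_SHIFT = 5      # bits 7:5
PMULT_MASK = 0x07


def pack_lde_cfg1(ntm, pmult):
    """Build the LDE_CFG1 byte from NTM (0..31) and PMULT (0..7)."""
    if not 0 <= ntm <= NTM_MASK:
        raise ValueError(f"NTM out of range: {ntm}")
    if not 0 <= pmult <= PMULT_MASK:
        raise ValueError(f"PMULT out of range: {pmult}")
    return (pmult << PMULT_SHIFT) | ntm


def unpack_lde_cfg1(value):
    """Split an LDE_CFG1 byte into (ntm, pmult)."""
    return value & NTM_MASK, (value >> PMULT_SHIFT) & PMULT_MASK


if __name__ == "__main__":
    # Register values for an NTM sweep from 2 to the 5-bit maximum, keeping PMULT at 3.
    for ntm in range(2, NTM_MASK + 1):
        print(f"NTM={ntm:2d} -> LDE_CFG1=0x{pack_lde_cfg1(ntm, 3):02X}")
```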

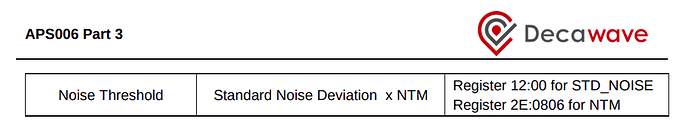

Also, do you know how the LDE threshold works? LDE_THRESH seems to change from one reception to the next (and does not track the reported STD_NOISE), and I can’t understand why.

I’m testing this against the application note formula (threshold = STD_NOISE × NTM):

If my DW1000 reports STD_NOISE = 40, I expect the threshold to be 40 × 13 = 520, but for one reception the DW1000 reported a threshold (read from the LDE_THRESH register) of 605. These thresholds seem impossible to predict and vary a lot. Here are some values I sampled:

| # | STD_NOISE | NTM | LDE_THRESH (DW1000) | LDE_THRESH (app note formula) |
|---|---|---|---|---|
| 1 | 44 | 13 | 696 | 572 |
| 2 | 36 | 13 | 542 | 468 |
| 3 | 44 | 13 | 655 | 572 |
| 4 | 40 | 13 | 597 | 520 |

Sample 3 has the same STD_NOISE as sample 1, yet a different threshold.
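A small script to quantify the mismatch, using only the four sampled values above. It computes the app note prediction (STD_NOISE × NTM), the ratio of the reported threshold to that prediction, and the multiplier the chip effectively applied (LDE_THRESH / STD_NOISE). With these samples the ratio is not constant (roughly 1.15 to 1.22), so no single fixed correction factor reproduces the register values; the script measures the discrepancy but does not explain it.

```python
# (STD_NOISE, NTM, LDE_THRESH reported by the DW1000), from the sampled values above.
SAMPLES = [
    (44, 13, 696),
    (36, 13, 542),
    (44, 13, 655),
    (40, 13, 597),
]


def threshold_mismatch(samples):
    """Compare reported LDE_THRESH with the STD_NOISE * NTM prediction."""
    rows = []
    for std_noise, ntm, reported in samples:
        predicted = std_noise * ntm
        rows.append({
            "std_noise": std_noise,
            "predicted": predicted,
            "reported": reported,
            "ratio": reported / predicted,           # > 1: chip used a higher threshold
            "effective_mult": reported / std_noise,  # multiplier the chip effectively applied
        })
    return rows


if __name__ == "__main__":
    for r in threshold_mismatch(SAMPLES):
        print(f"STD_NOISE={r['std_noise']:3d}  predicted={r['predicted']:4d}  "
              f"reported={r['reported']:4d}  ratio={r['ratio']:.3f}  "
              f"effective multiplier={r['effective_mult']:.2f}")
```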

@DecaLeo, maybe this has been answered in a technical document, or you have a published paper explaining how to change or understand this. It would be really helpful if you could share it.

Thanks!

I’ve asked a similar question before and never got an answer. The relationship between the register values, the measured noise and the threshold used is not clear, and I suspect it will stay that way.