Are you allowed to have infrastructure, or must this all be done by the swarm itself with no outside devices?

What you are asking for is trivial with infrastructure (an array of anchors) and likely impossible without it.

You have an O(N^2) system: each node has N-1 links to the others. For 200 nodes, that’s about 20,000 unique range distances (200 × 199 / 2 = 19,900). That is a large number!
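As a quick check on that count, here is a minimal Python sketch. The function names are mine, purely for illustration. It counts each pair once, and also shows the directed count if every node has to hear every other node separately.

```python
def unique_pairs(n: int) -> int:
    """Unique node-to-node ranges in a full mesh of n nodes (A-B counted once)."""
    return n * (n - 1) // 2


def directed_links(n: int) -> int:
    """Directed links, i.e. each node receiving from each of the other n-1 nodes."""
    return n * (n - 1)


if __name__ == "__main__":
    for n in (22, 50, 200):
        print(n, unique_pairs(n), directed_links(n))
```

The growth is the point: going from 22 nodes to 200 multiplies the pair count by roughly 86, not by 9.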

I think you mean occlusion more than interference. The human body blocks all UWB signals. How good your system is will depend on how you tag the humans. If it is on top of their head (like in a hat or helmet), it will work quite well. If it is in their pocket, or a name badge on their chest, it won’t work well. That’s the nature of the physics of the GHz UWB signal.

For devices that spend most of their time listening, battery usage is very high. Whether the battery is an issue will depend on how big a battery you can tolerate. For a DW1000 receiving for 8 hours, that’s about 4 watt hours, or about half an 18650 cell. Maybe a 14500 cell does it. A DW3000 system would use about 65% less. Battery is not your main issue here, though.
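The battery figures above reduce to simple arithmetic. This sketch only restates the numbers in the post (4 Wh over 8 hours, about 65% less for DW3000); the function names are hypothetical and no cell capacities are assumed.

```python
def average_power_w(energy_wh: float, hours: float) -> float:
    """Average power draw implied by an energy budget over a run time."""
    return energy_wh / hours


def reduced_energy_wh(energy_wh: float, saving_fraction: float) -> float:
    """Energy needed after a fractional saving (0.65 means 65% less)."""
    return energy_wh * (1.0 - saving_fraction)


if __name__ == "__main__":
    dw1000_wh = 4.0  # from the post: DW1000 receiving for 8 hours
    print("DW1000 average power (W):", average_power_w(dw1000_wh, 8.0))
    print("DW3000 energy for 8 h (Wh):", reduced_energy_wh(dw1000_wh, 0.65))
```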

The max packet size is 1024 bytes, net about 1000 bytes after headers and frame check. You will need 5 such packets to transmit 4800 bytes from a node.

Each 1024 byte packet takes about 1300 us (assuming a short preamble). You need some packet gap, say 100 us minimum. So 4800 bytes, sent as 5 packets, will cost you about 7 ms. Assuming you find some way to self organize slots (a challenge), about 142 such nodes fill 100% of the air time in one second. If you use ALOHA, it will be a train wreck and maybe workable at 30 to 40 nodes.
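The air time budget can be reproduced with a short sketch. The packet numbers are the ones quoted above; the one second cycle is my reading of the 142 node figure (142 × 7 ms is just under 1 s), and the names are illustrative only.

```python
import math

PACKET_PAYLOAD_BYTES = 1000  # net payload per max-size packet (from the post)
PACKET_AIRTIME_US = 1300     # per packet, assuming a short preamble (from the post)
PACKET_GAP_US = 100          # minimum gap between packets (from the post)
CYCLE_US = 1_000_000         # assumption: every node reports once per second


def packets_needed(payload_bytes: int) -> int:
    """Packets required to carry payload_bytes."""
    return math.ceil(payload_bytes / PACKET_PAYLOAD_BYTES)


def node_airtime_us(payload_bytes: int) -> int:
    """Air time one node occupies per report, including inter-packet gaps."""
    return packets_needed(payload_bytes) * (PACKET_AIRTIME_US + PACKET_GAP_US)


def max_nodes(payload_bytes: int, cycle_us: int = CYCLE_US) -> int:
    """Nodes that fit in one cycle with perfect slotting and zero idle time."""
    return cycle_us // node_airtime_us(payload_bytes)


if __name__ == "__main__":
    print(packets_needed(4800), node_airtime_us(4800), max_nodes(4800))
```

This is the best case with perfect slot scheduling. Any contention scheme such as ALOHA lands far below it.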

I think you missed a byte to bits conversion in there. Your system is heavily oversubscribed.

Yes, but you have only moved the problem to somewhere else.

At 200 nodes each sharing about 10 KB per second, you have 2 MB per second being sent around, so a network capable of 4 MB per second would be a target, or on the order of 40 Mbps in bit rate. This is not a trivial data network. This also implies a lot of power at each node to receive such a stream and to process it in real time.
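A rough sketch of that sizing, using the figures from the post. The 2× headroom factor is taken from the 2 MB to 4 MB step above; the straight byte to bit conversion gives 32 Mbps, so the 40 Mbps target presumably leaves some margin on top. Function names are hypothetical.

```python
def aggregate_bytes_per_s(nodes: int, bytes_per_node_per_s: int) -> int:
    """Total data rate if every node's stream must be carried by the network."""
    return nodes * bytes_per_node_per_s


def to_mbps(bytes_per_s: float) -> float:
    """Convert bytes per second to megabits per second (8 bits per byte)."""
    return bytes_per_s * 8 / 1e6


if __name__ == "__main__":
    total = aggregate_bytes_per_s(200, 10_000)  # about 10 KB/s per node
    target = 2 * total                          # 2x headroom, as in the post
    print(total, target, to_mbps(target))
```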

The new DW3000 series doesn’t change the basic math of the problem. They can go marginally faster with a shorter preamble, but otherwise have the same issues.

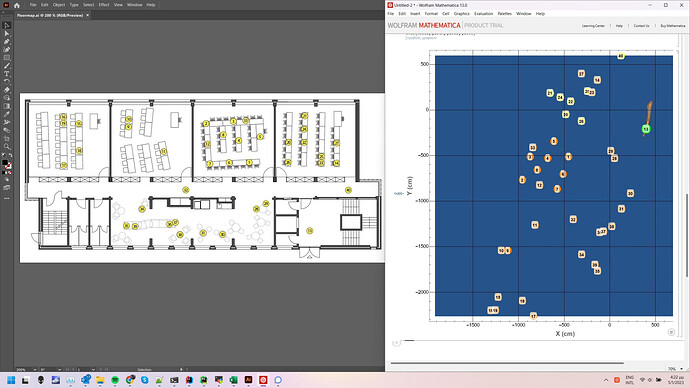

We do have an operating mode we call “schedule round robin multi range” (SRRMR). It has one anchor whose job is synchronization and control of the network, plus a PC to compute the distances between nodes. Each node does exactly as you describe, receives from all others and reports timestamps back to the central anchor. The PC then computes distances between nodes. This mode is often used in simulating nuclear spills for emergency drills, and was why we developed it for a client.

The issue is that SRRMR is quite capacity limited due to the O(N^2) issue: it tops out at around 22 nodes or so (at 5 Hz, I think). For that use case, it works. For you, it doesn’t.

What about carrier phase GPS receivers on each person? You can have ~3 cm resolution. It does require one base station (or it can be post processed if you don’t need real time). Since this is outdoors, you have GPS, though you need a good view of the sky from each human. Carrier phase GPS is now a lot cheaper than it once was.

Trivial to do with an anchor array. Our systems have about 3400 locates per second capacity, so 200 nodes could be located at, say, 15 Hz easily. Higher beacon rates allow smoothing and improved accuracy, too.
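The update rate follows directly from dividing system capacity by tag count. A minimal sketch with the numbers above (the function name is illustrative):

```python
def max_update_rate_hz(locates_per_s: float, tags: int) -> float:
    """Upper bound on per-tag update rate when capacity is shared evenly."""
    return locates_per_s / tags


if __name__ == "__main__":
    print(max_update_rate_hz(3400, 200))
```

That gives a ceiling of 17 Hz for 200 tags, so 15 Hz leaves a little margin.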

There is another mode, what we call “nav mode”, where an anchor array transmits time codes like GPS satellites and the tags can receive this and compute their own position. In this mode, there is no limit on the number of tags since they only receive (just like GPS). But this doesn’t get you distances between nodes, only their location. You would still need some means to share these locations if you are ultimately interested in relative distances between nodes.
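Once the positions have been shared by whatever side channel you choose, turning them into relative distances is the easy part. A minimal sketch, assuming each tag reports an (x, y, z) position in metres in a common frame; the data layout and names here are hypothetical, not part of any product API.

```python
from itertools import combinations
from math import dist  # Euclidean distance, Python 3.8+


def pairwise_distances(positions):
    """Map each unordered pair of tag IDs to the distance between them.

    positions: dict of tag ID -> (x, y, z) tuple in a shared coordinate frame.
    """
    return {
        (a, b): dist(positions[a], positions[b])
        for a, b in combinations(sorted(positions), 2)
    }


if __name__ == "__main__":
    shared = {"A": (0.0, 0.0, 0.0), "B": (3.0, 4.0, 0.0), "C": (0.0, 0.0, 2.0)}
    for pair, d in pairwise_distances(shared).items():
        print(pair, round(d, 3))
```

Note that the computation is still O(N^2), but it happens on a processor rather than over the air.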

Mike C.